
`export PATH=$PATH:~/.local/bin`

Choose a Java version. This is important: there are more variants of Java than there are cereal brands in a modern American store.

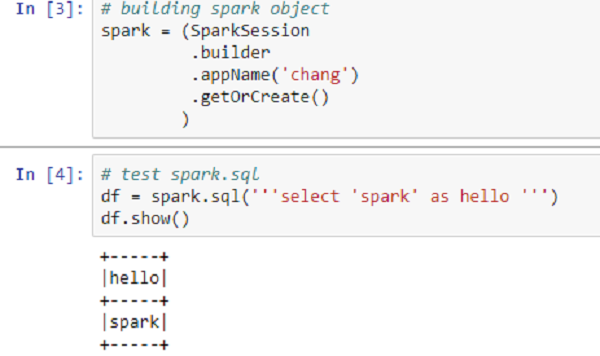

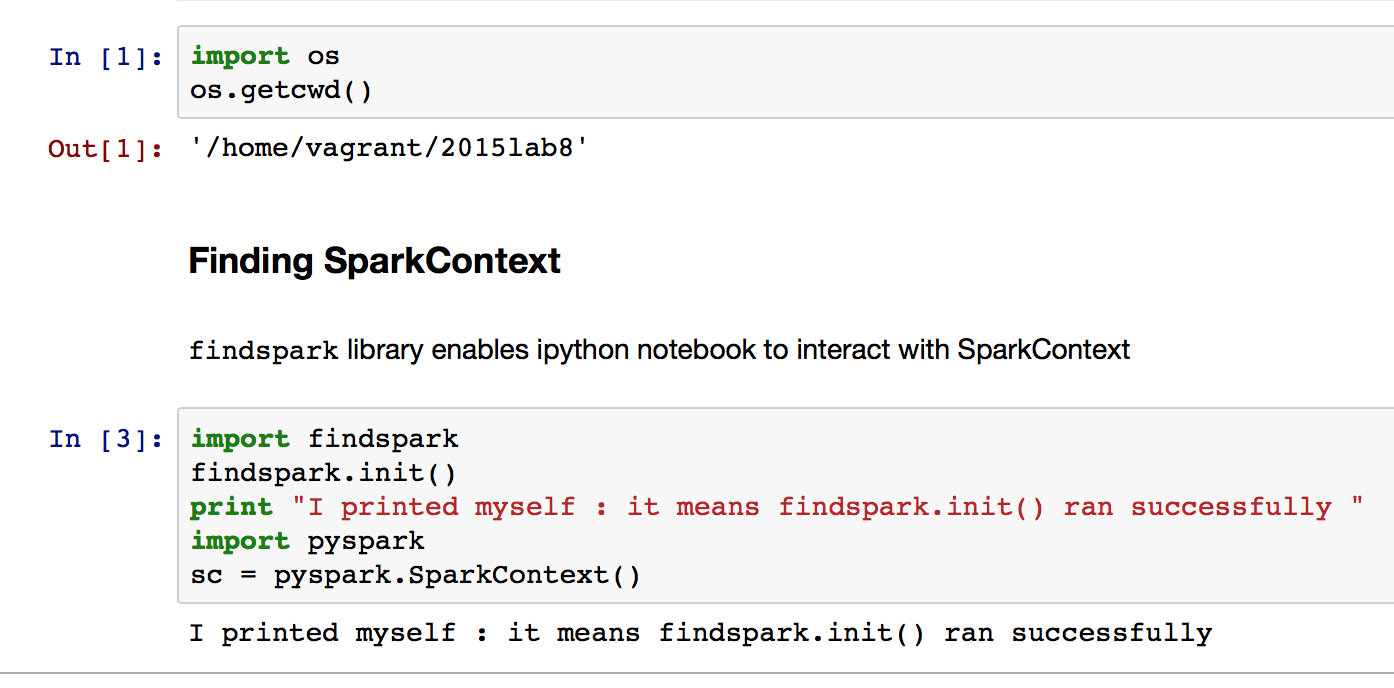

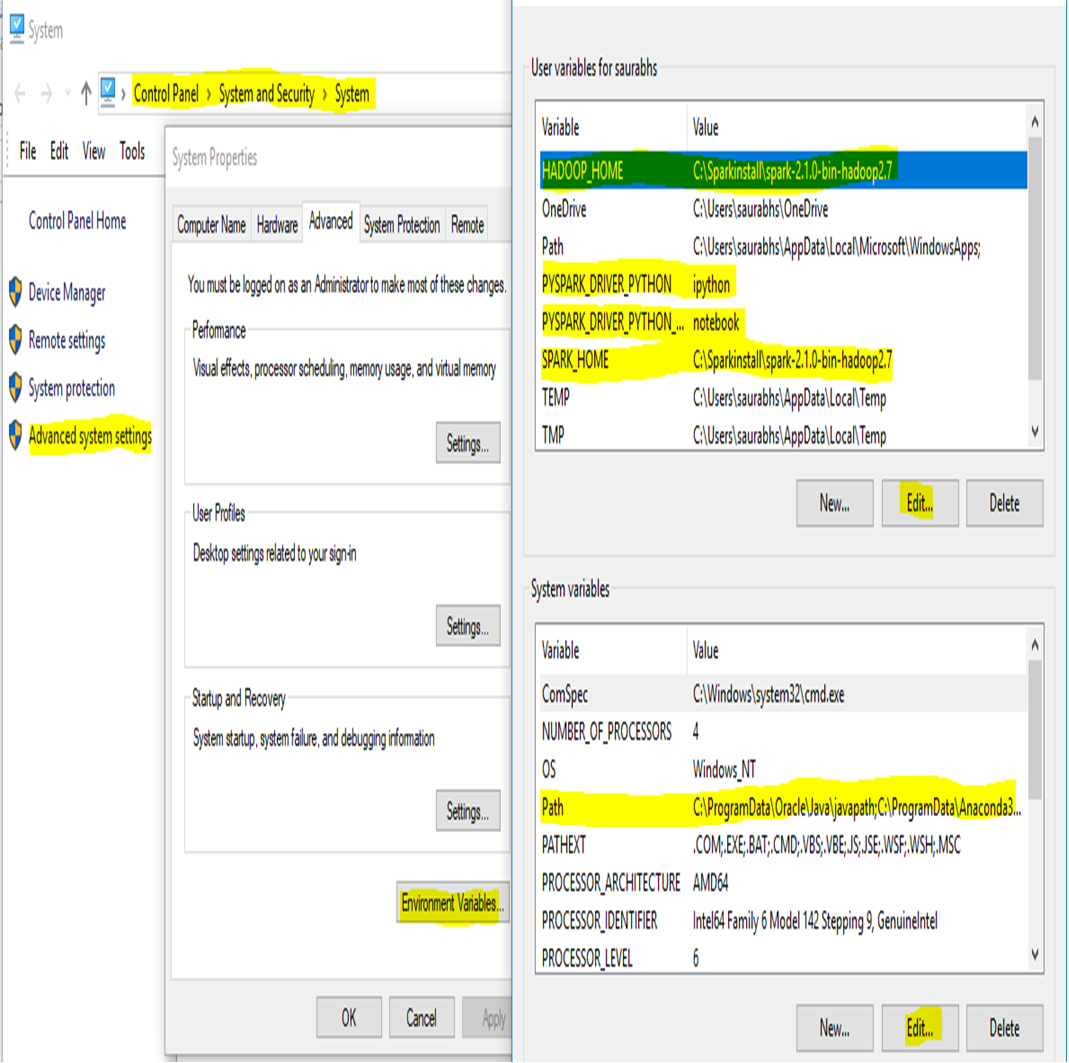

Install Jupyter with `pip3 install jupyter`, then augment the PATH variable so you can launch Jupyter Notebook easily from anywhere. Python 3.4+ is required for the latest version of PySpark, so make sure you have it installed before continuing; earlier Python versions will not work. You can check with `python3 --version`. Before you can start with Spark and Hadoop, you also need to make sure you have Java installed (version …).

This tutorial assumes you are using a Linux OS. That's because in real life you will almost always run and use Spark on a cluster using a cloud service like AWS or Azure, so it is wise to get comfortable with a Linux command-line-based setup process for running and learning Spark. If you're using Windows, you can set up an Ubuntu distro on a Windows machine using Oracle VirtualBox.

In this brief tutorial, I'll go over, step by step, how to set up PySpark and all its dependencies on your system and integrate it with Jupyter Notebook. Most users with a Python background take the `pip install` and `import` workflow for granted. However, unlike most Python libraries, starting with PySpark is not that straightforward: the PySpark+Jupyter combo needs a little bit more love than other popular Python packages.

You can also easily interface with SparkSQL and MLlib for database manipulation and machine learning. It will be much easier to start working with real-life large clusters if you have internalized these concepts beforehand. You distribute (and replicate) your large dataset in small, fixed chunks over many nodes, then bring the compute engine close to them to make the whole operation parallelized, fault-tolerant, and scalable. By working with PySpark and Jupyter Notebook, you can learn all these concepts without spending anything.
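The prerequisites mentioned above (a recent enough Python 3, Java, and a `jupyter` command reachable from the PATH) can be checked with a short script before going further. This is only a minimal sketch using the Python standard library: the function name `check_prerequisites` and the keys of the dictionary it returns are made up for illustration, and the `(3, 4)` minimum is simply the figure quoted in the text.

```python
import shutil
import sys

# Minimum Python version quoted in the text; adjust if your PySpark needs more.
MIN_PYTHON = (3, 4)


def check_prerequisites(min_python=MIN_PYTHON):
    """Report whether each prerequisite appears to be available.

    Hypothetical helper: it only checks that the executables can be found
    on the PATH, not that they are the right versions of Java or Jupyter.
    """
    return {
        "python_ok": sys.version_info >= min_python,
        "java_on_path": shutil.which("java") is not None,
        "jupyter_on_path": shutil.which("jupyter") is not None,
    }


if __name__ == "__main__":
    for name, ok in check_prerequisites().items():
        print("{}: {}".format(name, "found" if ok else "missing"))
```

If `jupyter_on_path` comes back as missing right after installing Jupyter, the PATH has probably not been extended yet in the current shell session.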
Spark is also versatile enough to work with filesystems other than Hadoop, such as Amazon S3 or Databricks (DBFS). But the idea is always the same. Remember, Spark is not a new programming language you have to learn; it is a framework working on top of HDFS. This presents new concepts like nodes, lazy evaluation, and the transformation-action (or "map and reduce") paradigm of programming.

However, if you are proficient in Python/Jupyter and machine learning tasks, it makes perfect sense to start by spinning up a single cluster on your local machine. You could also run one on Amazon EC2 if you want more storage and memory.
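To make the ideas of fixed-size chunks, lazy evaluation, and transformation-action a little more concrete, here is a plain-Python analogy. It deliberately does not use the PySpark API: generators stand in for lazy transformations, `reduce` stands in for an action, and the names `chunked`, `square`, and `calls` are hypothetical helpers introduced only for this sketch.

```python
from functools import reduce
from itertools import islice


def chunked(records, size):
    """Yield successive fixed-size chunks (lists) from an iterable."""
    it = iter(records)
    while True:
        chunk = list(islice(it, size))
        if not chunk:
            return
        yield chunk


calls = []  # records every element that actually gets processed


def square(x):
    calls.append(x)
    return x * x


data = range(1, 11)
chunks = chunked(data, 4)  # conceptually: [1..4], [5..8], [9, 10]

# "Transformations": describe the per-chunk work lazily; nothing runs yet.
partial_sums = (sum(map(square, chunk)) for chunk in chunks)
work_before_action = len(calls)

# "Action": combining the partial results forces the work to happen.
total = reduce(lambda a, b: a + b, partial_sums, 0)

print(work_before_action, total, len(calls))
```

In real Spark the chunks would live on different nodes and be processed in parallel; here everything runs in one process, so only the deferred-evaluation aspect carries over.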

These options cost money, even to start learning (for example, Amazon EMR is not included in the one-year Free Tier program, unlike EC2 or S3 instances).

Python 2.7 is still widely used; however, all new support and features are being rolled out with Python 3.6. We recommend installing Jupyter Notebook and Toree with Python 3.6.

2) Then create a virtualenv folder and activate a session: run `virtualenv -p python3.6 env3`, followed by `source env3/bin/activate`.


Author: Javier
